tag > Generative

-

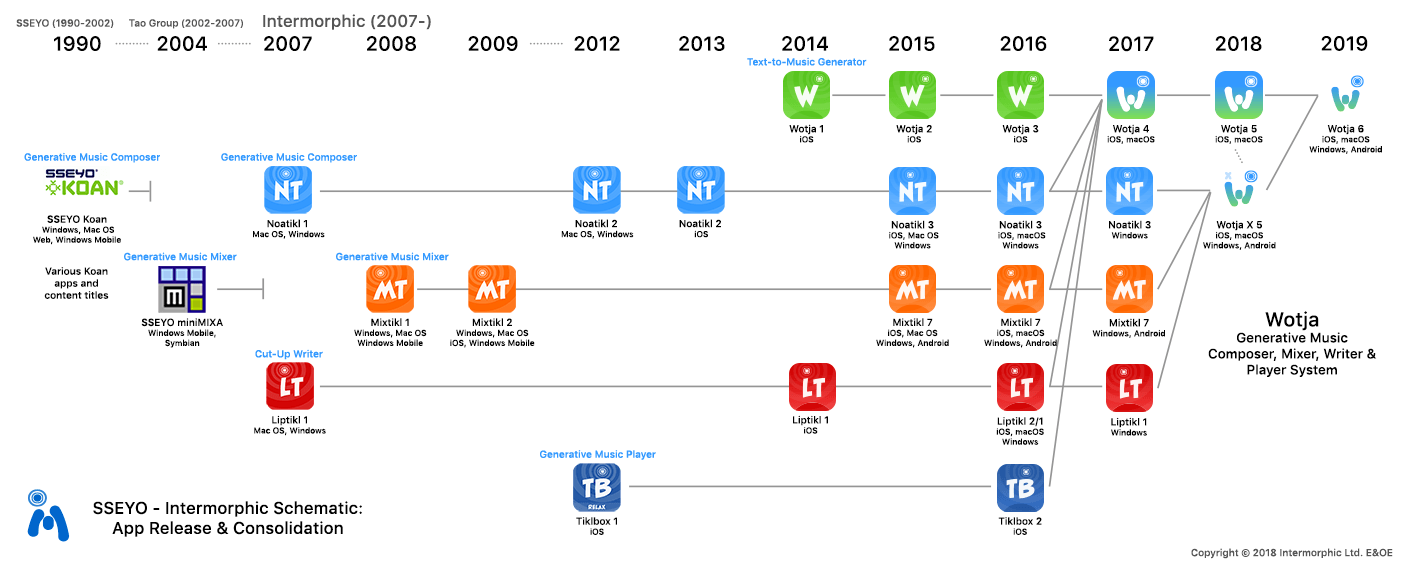

Visual history of Intermorphic's involvement in #Generative #Music over almost 30 years:

http://cdm.link/newswires/intermorphic-gives-us-visual-history-involvement-generative-music-almost-30-years/

-

The Surprising Creativity of Digital Evolution: A Collection of Anecdotes from the Evolutionary Computation and Artificial Life Research Communities: https://arxiv.org/abs/1803.03453

-

Grammatical Evolution:

Grammar induction: https://en.wikipedia.org/wiki/Grammar_induction

PonyGE2: https://towardsdatascience.com/introduction-to-ponyge2-for-grammatical-evol..

PyNeurGen: http://pyneurgen.sourceforge.net/index.html

PySwarms: https://pyswarms.readthedocs.io/en/latest/index.html

PyGMO: esa.github.io/pygmo/

Learning Context-Free Grammars: Grammars-by-Extended-Inductive-CYK-Algorithm.pdf

Structural Engineering Optimisation In Grammatical Evolution:

Grammatical Evolution of Behaviour Trees for Mario AI:

-

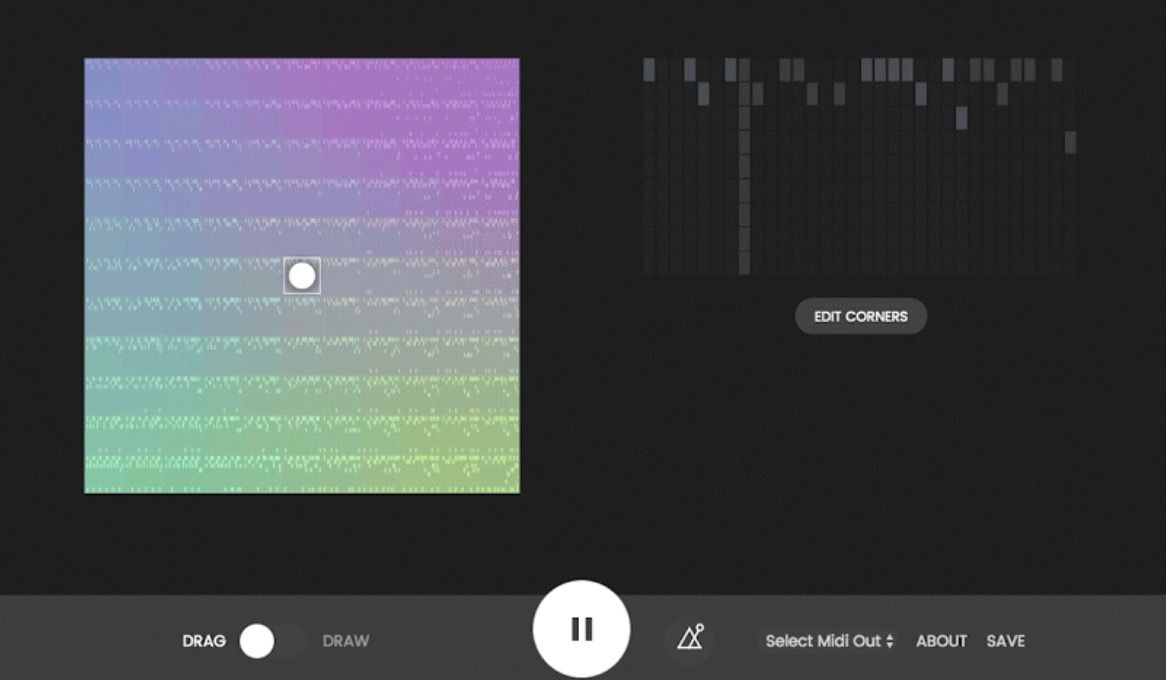

Beat-Blender uses machine learning to create music with interactive latent spaces. Built with deeplearnjs + Magenta: https://experiments.withgoogle.com/ai/beat-blender

-

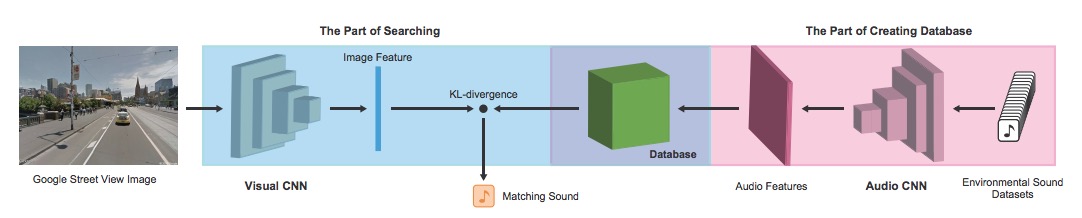

Upload an image, and the network generates a fitting natural soundscape:

Demo: http://imaginarysoundscape2.qosmo.jp/

-

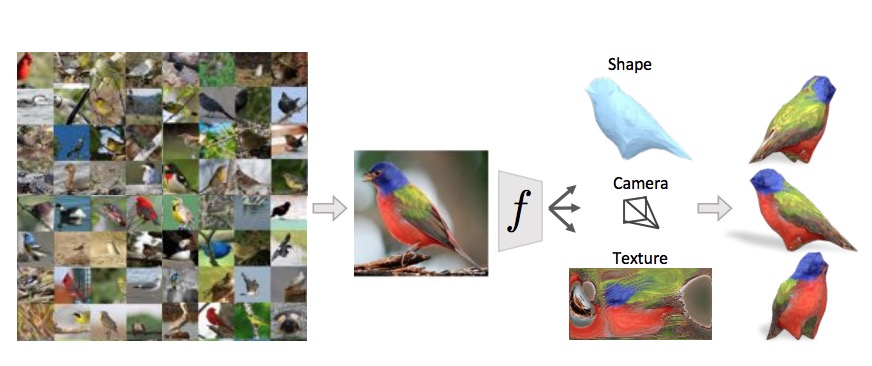

"Learning Category-Specific Mesh Reconstruction from Image Collections": https://akanazawa.github.io/cmr/ https://arxiv.org/pdf/1803.07549.pdf #ML #Generative

-

A Syntactic Neural Model for General-Purpose Code Generation:

https://arxiv.org/abs/1704.01696 #ML #Generative

-

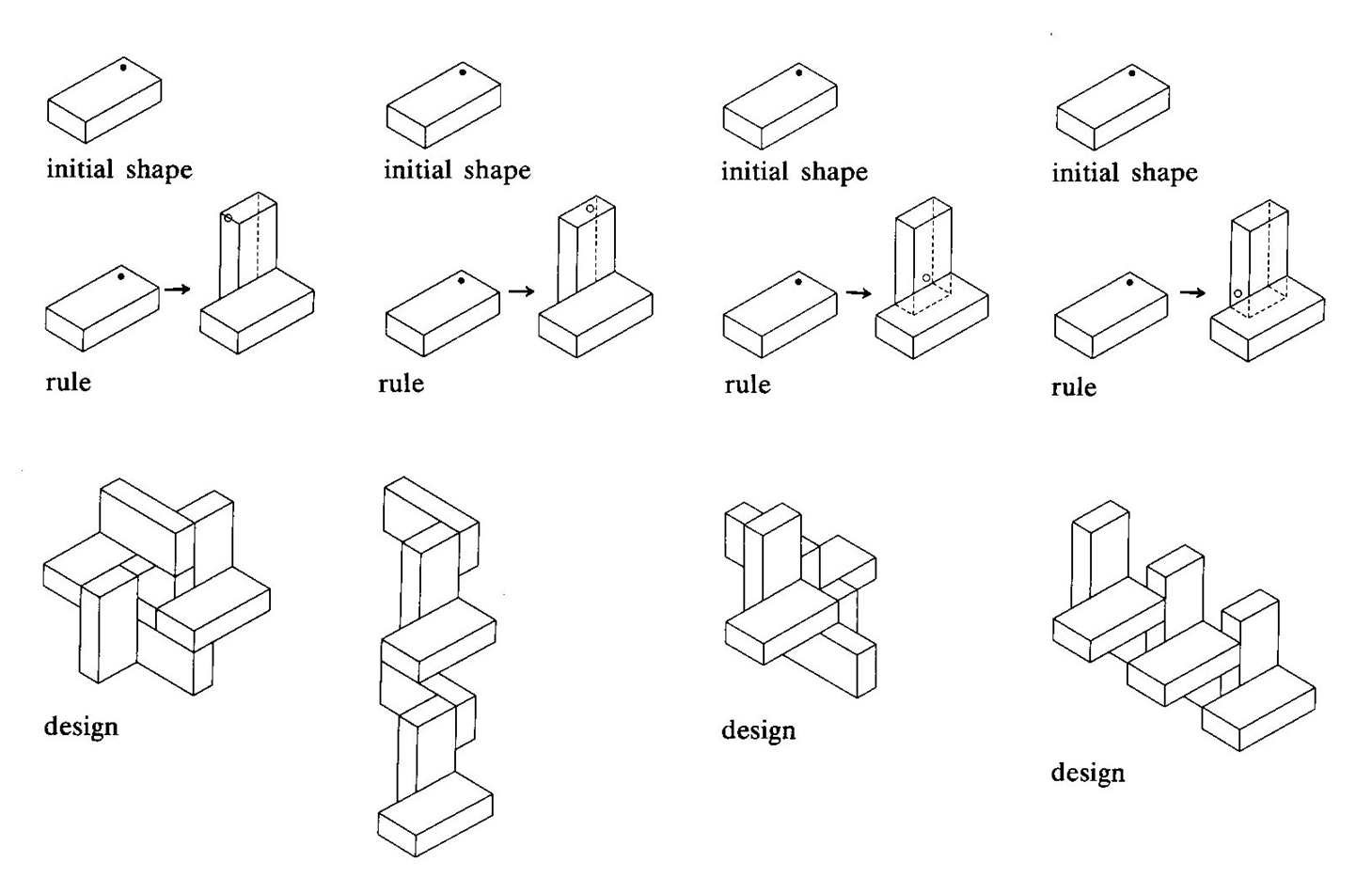

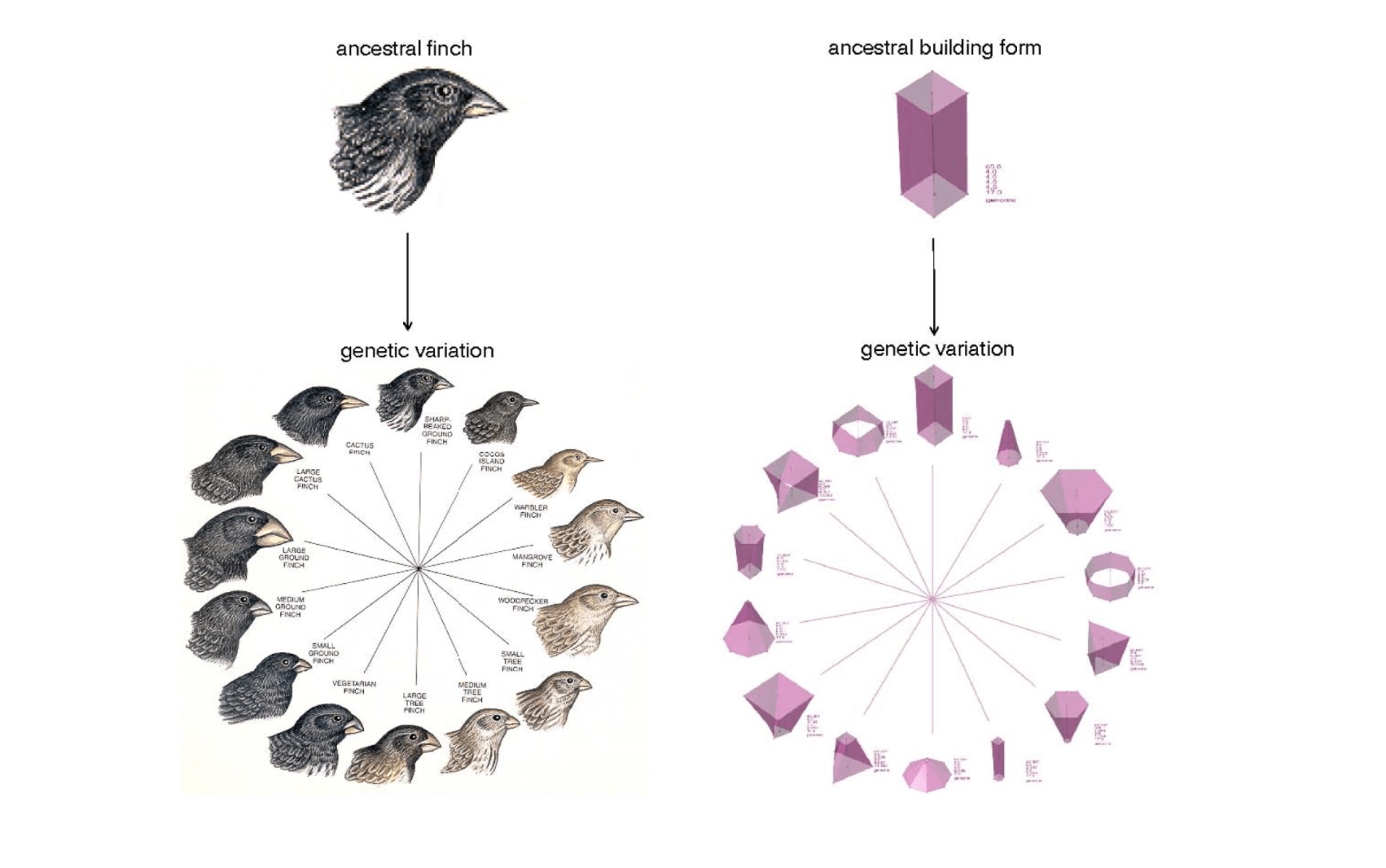

Presentation by John Frazer on "Computational Design":

About the talk: In the talk Frazer describes the monumental problems we face globally that architecture could address, the power computational design possesses, and the tragedy that the latter is not employed to address the former. The computational compression of space and time, virtual prototyping, and direct control over robotic fabrication all have the potential to address massive global issues. Frazer describes the robust capabilities of cellular automata, genetic algorithms, and evolutionary algorithms.

About the speaker: John Frazer is the godfather of algorithmic and evolutionary design in architecture. Frazer taught at the Architectural Association in London, Cambridge University, and the University of Ulster. He is the former head of the School of Design at Hong Kong Polytechnic and the Queensland University of Technology.

Read more: http://www.interactivearchitecture.org/the-generator-project.html

-

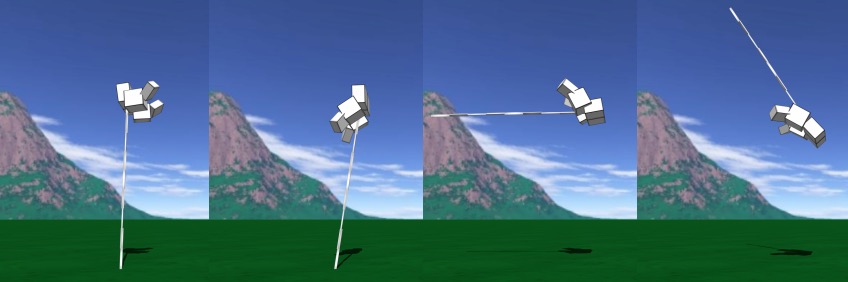

The interactive evolutionary algorithm in Nintendo Wii Mii Creator:

#ML #Evolution #Generative #HCI

"The Nintendo Wii Mii Creator application works either by manual editing of face and body features, or by an interactive evolutionary algorithm (Takagi, 2001, "Interactive Evolutionary Computation: Fusion of the Capabilities of {EC} Optimization and Human Evaluation"; Dawkins, 1986, "The Blind Watchmaker)), shown here. The evolutionary algorithm is accessed by choosing "Start from a lookalike". The user is presented with a large random population of faces, and chooses a favourite from them. A new (smaller) population of faces is created by the system, by mutating the current face (random changes to the face's features). Then the user chooses again, and this process loops. Gradually the user explores "face space" (Caldwell and Johnston, 1991, "Tracking a criminal suspect through face-space with a genetic algorithm") and hopefully finds the desired face."

-

"Gloomy Sunday" - Latest addition to Memo Akten’s ‘Learning to See’ project uses a neural network based realtime image translation model, to generate visual poetry.

-

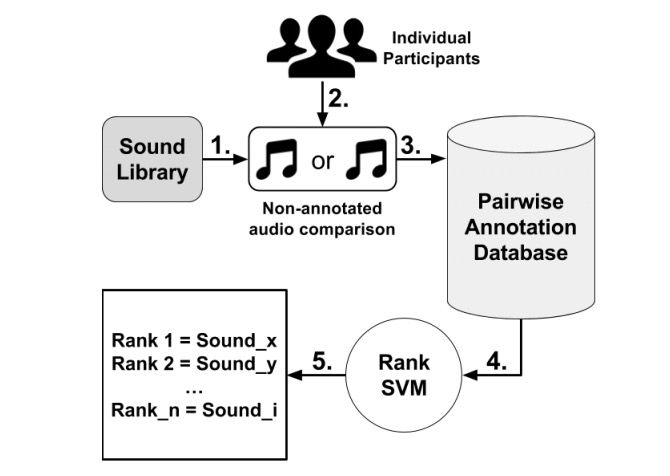

"Modelling Affect for Horror Soundscapes": #ML #Generative #Music #Emotion http://antoniosliapis.com/papers/modelling_affect_for_horror_soundscapes.pdf

Abstract: "The feeling of horror within movies or games relies on the audience’s perception of a tense atmosphere — often achieved through sound accompanied by the on-screen drama — guiding its emotional experience throughout the scene or game-play sequence. These progressions are often crafted through an a priori knowledge of how a scene or game-play sequence will playout, and the intended emotional patterns a game director wants to transmit. The appropriate design of sound becomes even more challenging once the scenery and the general context is autonomously generated by an algorithm. Towards realizing sound-based affective interaction in games this paper explores the creation of computational models capable of ranking short audio pieces based on crowdsourced annotations of tension, arousal and valence. Affect models are trained via preference learning on over a thousand annotations with the use of support vector machines, whose inputs are low-level features extracted from the audio assets of a comprehensive sound library. The models constructed in this work are able to predict the tension, arousal and valence elicited by sound, respectively, with an accuracy of approximately 65%, 66% and 72%."
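
The abstract mentions preference learning with support vector machines but does not spell out the formulation. A common way to do this is the pairwise (RankSVM-style) transform: each annotation "clip A is more tense than clip B" becomes a feature-difference vector, and a linear model is trained with a hinge loss on those differences. The sketch below assumes that formulation, uses plain subgradient descent instead of an SVM library, and runs on synthetic data; the three features and the hidden weights are made up for illustration and have nothing to do with the paper's audio features.

```python
import random


def score(w, x):
    """Linear ranking score of one item."""
    return sum(wk * xk for wk, xk in zip(w, x))


def train_rank_svm(features, preferences, epochs=200, lr=0.05, reg=0.01, seed=0):
    """Fit linear weights from pairs (i, j), each meaning item i is ranked above item j.

    Minimises reg/2 * ||w||^2 + max(0, 1 - w.(x_i - x_j)) per pair
    by stochastic subgradient descent.
    """
    rng = random.Random(seed)
    dim = len(features[0])
    w = [0.0] * dim
    pairs = list(preferences)
    for _ in range(epochs):
        rng.shuffle(pairs)
        for i, j in pairs:
            diff = [a - b for a, b in zip(features[i], features[j])]
            violated = score(w, diff) < 1.0  # inside the margin or mis-ordered
            for k in range(dim):
                grad = reg * w[k] - (diff[k] if violated else 0.0)
                w[k] -= lr * grad
    return w


def pairwise_accuracy(w, features, preferences):
    """Fraction of preference pairs that the model orders correctly."""
    correct = sum(score(w, features[i]) > score(w, features[j])
                  for i, j in preferences)
    return correct / len(preferences)


def make_synthetic_data(n_items=40, n_pairs=100, seed=0):
    """Synthetic items and preferences derived from hidden (made-up) weights."""
    rng = random.Random(seed)
    hidden = [2.0, -1.0, 0.5]
    features = [[rng.random() for _ in hidden] for _ in range(n_items)]
    preferences = []
    for _ in range(n_pairs):
        i, j = rng.sample(range(n_items), 2)
        if score(hidden, features[i]) < score(hidden, features[j]):
            i, j = j, i
        preferences.append((i, j))
    return features, preferences


if __name__ == "__main__":
    features, preferences = make_synthetic_data()
    weights = train_rank_svm(features, preferences)
    print(pairwise_accuracy(weights, features, preferences))
```

The accuracy figures quoted in the abstract are of this pairwise kind: the share of annotated pairs for which the model agrees with the annotators about which sound ranks higher.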

-

"EvoFashion: Customising Fashion Through Evolution" #ML #Generative

-

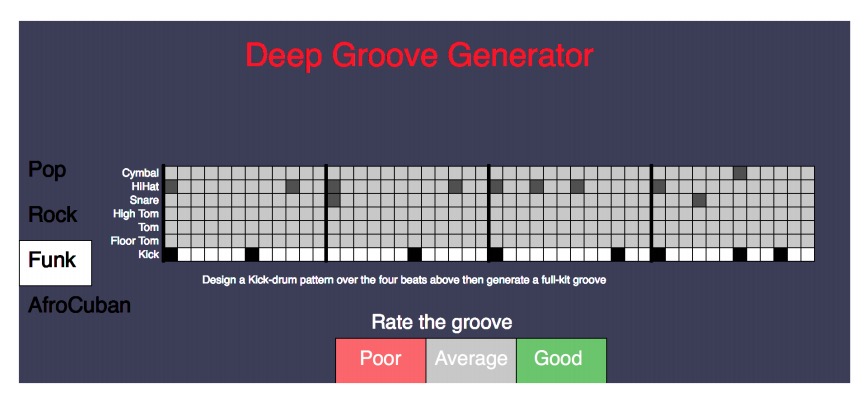

"Talking Drums: Generating drum grooves with neural networks": https://arxiv.org/pdf/1706.09558.pdf #ML #Generative #Music

-

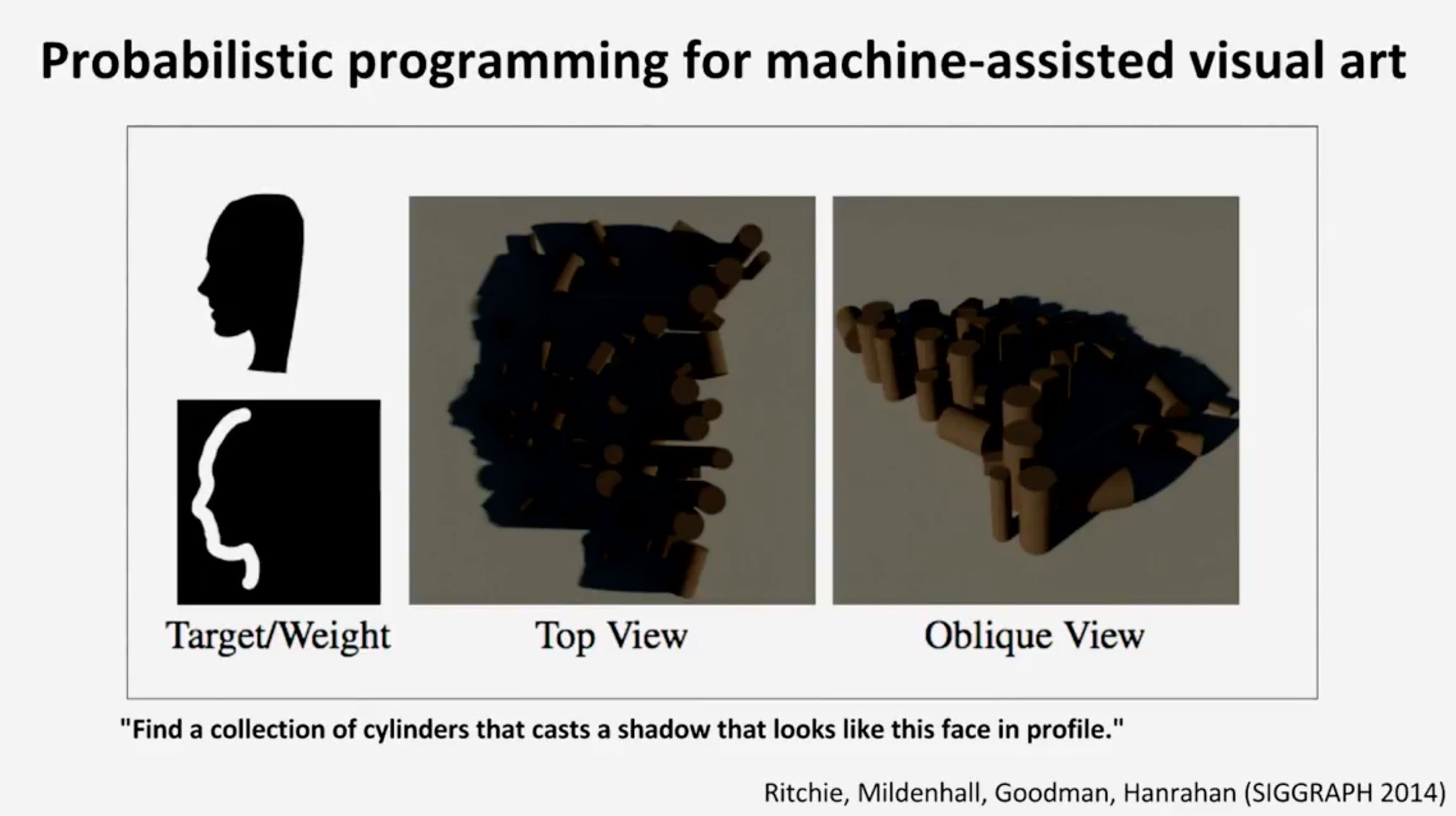

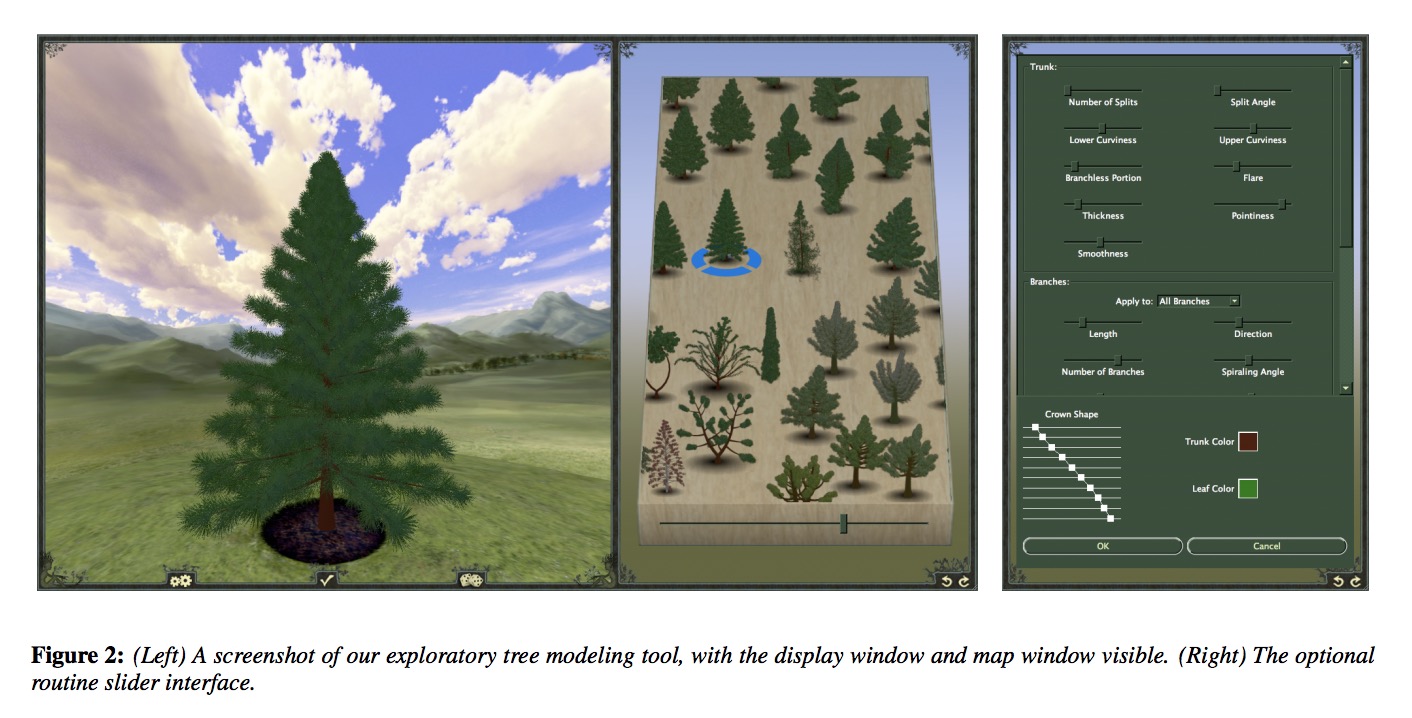

Exploratory Modeling with Collaborative Design Spaces:

graphics.stanford.edu/~lfyg/cds.pdf #ML #Generative

-

What an ‘infinite’ AI-generated podcast can tell us about the future of entertainment:

‘It is a universe that is accessible only to you. No one has beheld it before and no one will behold it again’

-

#Prediction: In 2018 someone will start a new porn site, which 100% focuses on "fake" videos, generated with machine learning. It will be the most popular adult site online by 2021. #ML #Culture #Generative