AI rivals average human competitive coder?

A journalist asked me to comment on the story "DeepMind AI rivals average human competitive coder". My comments ended up in the CNBC story "Machines are getting better at writing their own code. But human-level is ‘light years away’". Here are my comments in full:

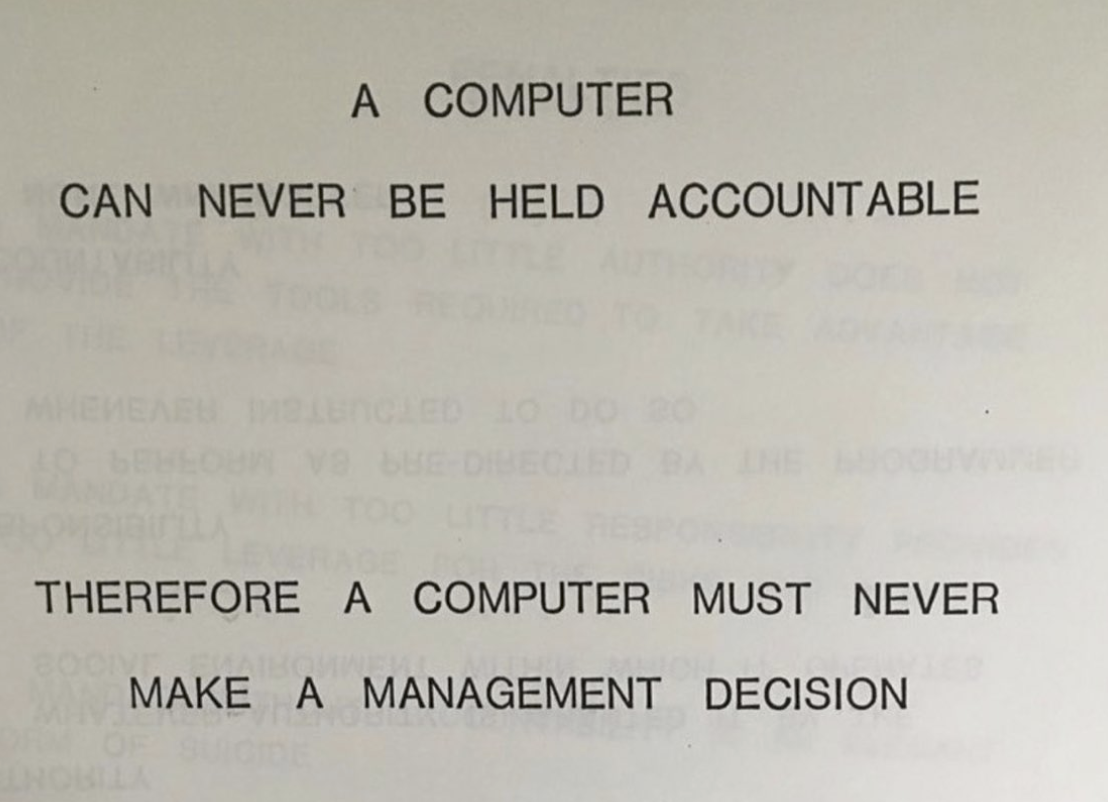

Every good computer programmer knows that it is essentially impossible to create "perfect code" and that all programs are flawed and will eventually fail in unforeseeable ways, due to hacks, bugs or complexity. Hence, computer programming in most critical contexts is fundamentally about building "fail-safe" systems that are "accountable". In a 1979 presentation, IBM made the statement: "A computer can never be held accountable. Therefore a computer must never make a management decision". Despite all the recent hype around "AI coders outperforming humans", the question of the accountability of code remains largely ignored. Has anything changed since IBM made that statement? Do we really want hyper-complex, opaque, non-introspectable, so-called "autonomous" systems that are essentially incomprehensible to most and unaccountable to all to run our critical infrastructure, such as the financial system, the food supply chain, nuclear power plants, weapon systems or spaceships?

#ML #Augmentation #Complexity #InfoSec #Robot #Systems #Comment