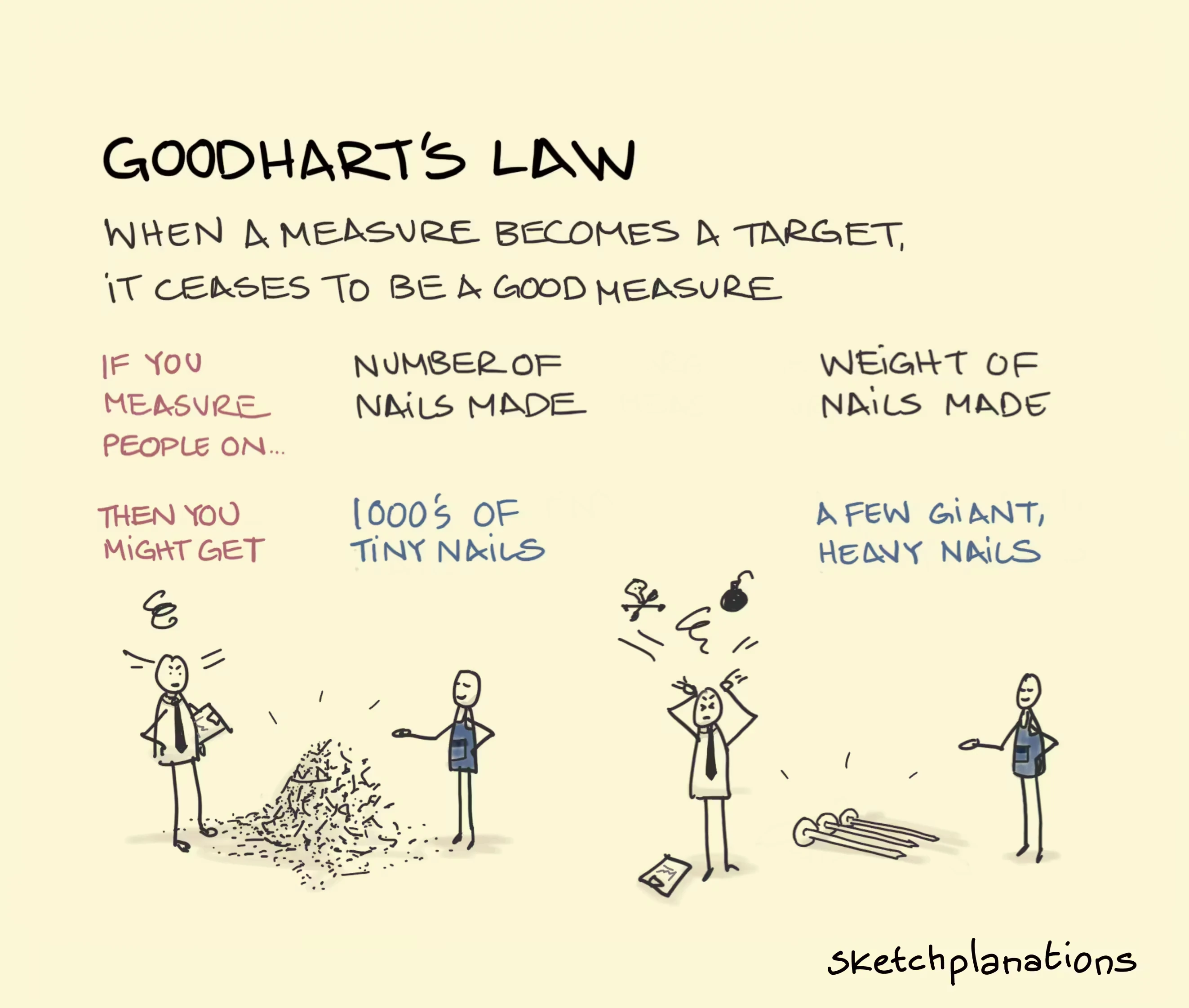

Goodhart's Law

Goodhart's Law states that when a measure becomes a target, it ceases to be a good measure. Named after economist Charles Goodhart, it describes a common failure of managing a system through proxy metrics: once people are rewarded on the metric, they optimize for the metric itself rather than the actual goal, which invites manipulation and unintended consequences.
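
The effect can be illustrated with a small toy model. This is only a sketch under invented assumptions: a worker has a fixed effort budget, the functions `true_goal` and `proxy_metric` and all of the weights are hypothetical, and gaming is assumed to raise the measured score more cheaply than real work does.

```python
# Hypothetical toy model: a worker splits a fixed effort budget between
# real work and gaming the metric. All names and numbers are illustrative.

TOTAL_EFFORT = 10


def true_goal(real_effort: int) -> int:
    """What we actually care about: only real work contributes."""
    return 3 * real_effort


def proxy_metric(real_effort: int, gaming_effort: int) -> int:
    """What gets measured: gaming inflates it more cheaply than real work."""
    return 3 * real_effort + 5 * gaming_effort


def best_split(objective):
    """Return the (real, gaming) effort split that maximizes the objective."""
    splits = [(r, TOTAL_EFFORT - r) for r in range(TOTAL_EFFORT + 1)]
    return max(splits, key=objective)


# Metric is only observed: the worker optimizes the real goal.
honest = best_split(lambda s: true_goal(s[0]))
# Metric becomes the target: the worker optimizes the proxy instead.
gamed = best_split(lambda s: proxy_metric(*s))

for label, (real, gaming) in [("honest", honest), ("gamed", gamed)]:
    print(label, "proxy =", proxy_metric(real, gaming), "goal =", true_goal(real))
```

In this sketch the proxy tracks the goal exactly as long as nobody games it. Once the proxy is the thing being maximized, the measured score rises while the real outcome falls, which is the pattern the law describes.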