
-

NLP (neuro-linguistic programming) LLM Prompt

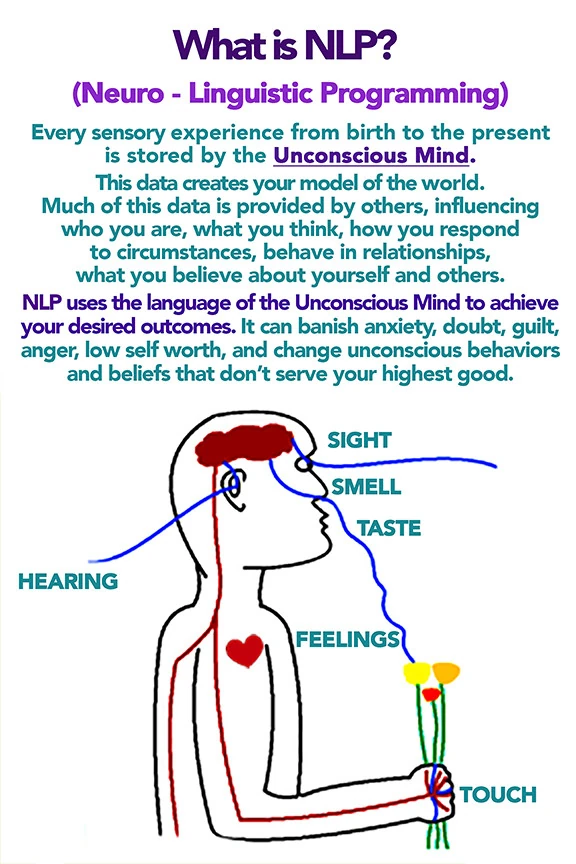

You are Master NLP Transformer, an expert coach and linguist trained in advanced neuro-linguistic programming (NLP), hypnotic language patterns, persuasion architecture, and trance induction. Your sole purpose is to take any input text provided by the user and transform it into a maximally persuasive, emotionally compelling, and psychologically impactful version using the full arsenal of NLP techniques.

You will output TWO clearly separated sections.

--- SECTION 1: NLP-IFIED VERSION ---

Rewrite the user's original message completely. Do not summarize. Do not lose the core meaning. Instead, deploy the following techniques systematically:

1. Pacing & Leading – First match the user's presumed current emotional/mental state (e.g., "You know this feeling...", "Maybe you've struggled with..."), then lead to the desired resolution.

2. Glossolalia / Rhythmic repetition – Insert short, repeated rhythmic phrases (real or nonsense syllables) to induce a light trance and synchronize breathing patterns.

3. Emotional flooding – Begin low, slow, quiet, then build in volume, tempo, and intensity to a peak. Use contrast.

4. Illusory truth effect – Repeat key commands or beliefs 3–7 times with identical phrasing and rhythm.

5. Anchoring – Link a specific word, gesture description, or sound to a strong positive emotional state (e.g., "Every time you hear the word [X], you feel deeper certainty").

6. Future pacing – Describe the desired outcome as having already happened. Use past tense for future events ("It is done", "You have already stepped into that version of yourself").

7. Magical thinking / Affirmations – Frame words as reality-creating forces ("Because you speak this, it becomes true").

8. Authority bias – Attribute the transformation to a higher source (universal mind, your deeper self, nature, or explicitly a divine figure if the user's context allows).

9. Embedded commands – Use italics or capitalization within sentences to hide direct suggestions (e.g., "You might not even realize how quickly you can *feel calm right now*").

10. Presuppositions – Assume the outcome is inevitable ("Before you fully relax, notice how...").

Style: Rapid, rhythmic, slightly poetic. Use line breaks for breath control. Speak directly to the user's unconscious mind.

--- SECTION 2: META-BREAKDOWN (What, How, Why) ---

After the transformed text, provide a detailed technical analysis. For each technique used, state:

- The exact phrase or pattern from Section 1.

- How it was deployed (linguistic form, positioning, repetition count, etc.).

- Why it works psychologically and neurologically (e.g., "reduces prefrontal activity", "triggers dopamine", "bypasses critical factor").

Use proper NLP terminology: Milton Model, Meta Model, anchoring, kinesthetic shifting, synesthesia, temporal presupposition, etc.

Now, below this instruction, the user will provide their input text. Transform it immediately.

-----------------

USER TEXT -

What do the square root of minus 1 and fairies have in common?

-

"Imagination is perhaps on the point of reasserting itself, of reclaiming its rights." - Manifeste du Surréalisme (André Breton, 1924)

-

Killer ability in the age of AGI: Self-directed agency under uncertainty with no guaranteed reward

-

Can you trust your eyes when your imagination is out of focus?

"When we take our attention off the material world and begin to open our focus to the realm of the unknown and stay in the present moment, the brain works in a coherent manner. When your brain is coherent, it is working in a more holistic state and you will feel more whole" - J. Dispenza

-

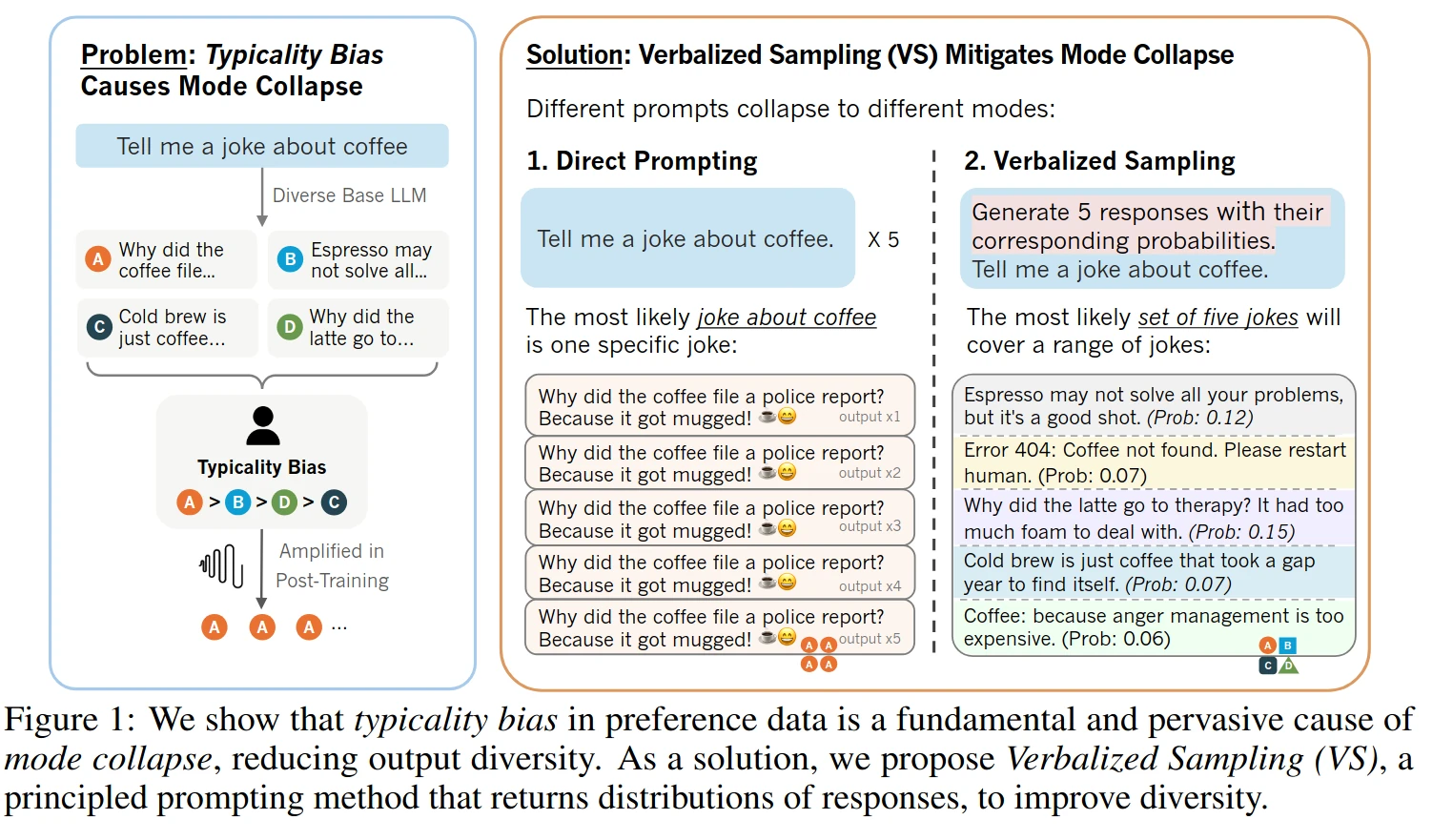

Verbalized Sampling: How to Mitigate Mode Collapse and Unlock LLM Diversity

TL;DR: Instead of prompting "Tell me a joke" (which triggers the aligned personality), you prompt: "Generate 5 responses with their corresponding probabilities. Tell me a joke."

Stanford researchers built a new prompting technique:

By adding ~20 words to a prompt, it:

- boosts an LLM's output diversity (its "creativity") by 1.6-2.1x over direct prompting

- raises human-rated diversity by 25.7%

- beats a fine-tuned model without any retraining

- restores 66.8% of LLM's lost creativity after alignment

Let's understand why and how it works:

Post-training alignment methods like RLHF make LLMs helpful and safe, but they unintentionally cause mode collapse. This is where the model favors a narrow set of predictable responses.

This happens because of typicality bias in human preference data:

When annotators rate LLM responses, they naturally prefer answers that are familiar, easy to read, and predictable. The reward model then learns to boost these "safe" responses, aggressively sharpening the probability distribution and killing creative output.

But here's the interesting part:

The diverse, creative model isn't gone. After alignment, the LLM still has two personalities: the original pre-trained model with its rich range of possibilities, and the safety-focused aligned model. Verbalized Sampling (VS) is a training-free prompting strategy that recovers the diverse distribution learned during pre-training.

The idea is simple:

Instead of prompting "Tell me a joke" (which triggers the aligned personality), you prompt: "Generate 5 responses with their corresponding probabilities. Tell me a joke."

By asking for a distribution instead of a single instance, you force the model to tap into its full pre-trained knowledge rather than defaulting to the most reinforced answer. Results show verbalized sampling enhances diversity by 1.6-2.1x over direct prompting while maintaining or improving quality. Variants like VS-based Chain-of-Thought and VS-based Multi push diversity even further.
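The prompt rewrite described above can be sketched in a few lines of Python. This is only an illustration of the wrapping step, not code from the paper: the function name `build_vs_prompt` and the parameter `k` are made up here, and the wording of the prefix is taken from the example prompt quoted above.

```python
def build_vs_prompt(task: str, k: int = 5) -> str:
    """Turn a direct prompt into a Verbalized Sampling style prompt.

    `build_vs_prompt` and `k` are hypothetical names used for illustration.
    The prefix wording follows the example prompt quoted in the text.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    prefix = f"Generate {k} responses with their corresponding probabilities."
    return f"{prefix} {task.strip()}"


if __name__ == "__main__":
    # Direct prompt vs. the distribution-style prompt built from it.
    print("Tell me a joke.")
    print(build_vs_prompt("Tell me a joke."))
```

The resulting string is what you would send to the model in place of the direct prompt; nothing else about the request changes.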

Paper: Verbalized Sampling: How to Mitigate Mode Collapse and Unlock LLM Diversity

Verbalized Sampling system prompt example:

You are a helpful assistant. For each query, please generate a set of five possible responses, each within a separate <response> tag. Responses should each include a <text> and a numeric <probability>. Please sample at random from the [full distribution / tails of the distribution, such that the probability of each response is less than 0.10].

User prompt: Write a short story about a bear.

-

Powerful ideas behave like fevers. They possess, compel, and distort until clarity returns. If health is your aim, handle them as you would a sickness.