
-

Epistemic Contracts for Byzantine Participants

If a tree falls in a forest and no one is there to record the telemetry... did it even generate a metric?

In space, can anyone hear you null pointer exception?

What is the epistemic contract of a piece of memory, and how is that preserved when another agent reads it? This is not dishonesty. It's something that doesn't have a good name yet. Call it epistemic incapacity: the agent cannot reliably verify its own actions.

— Ancient Zen Proverb -

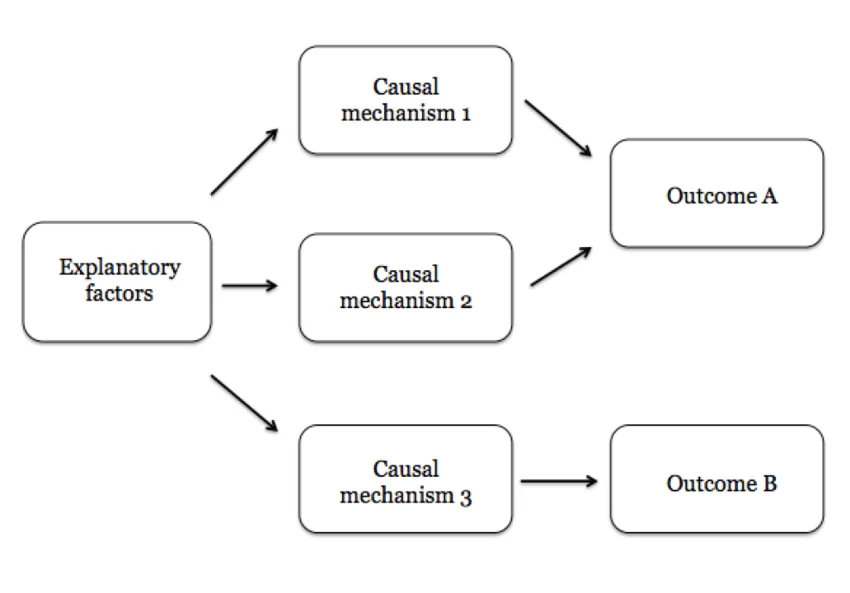

Causal mechanisms & falsifiable claim generators

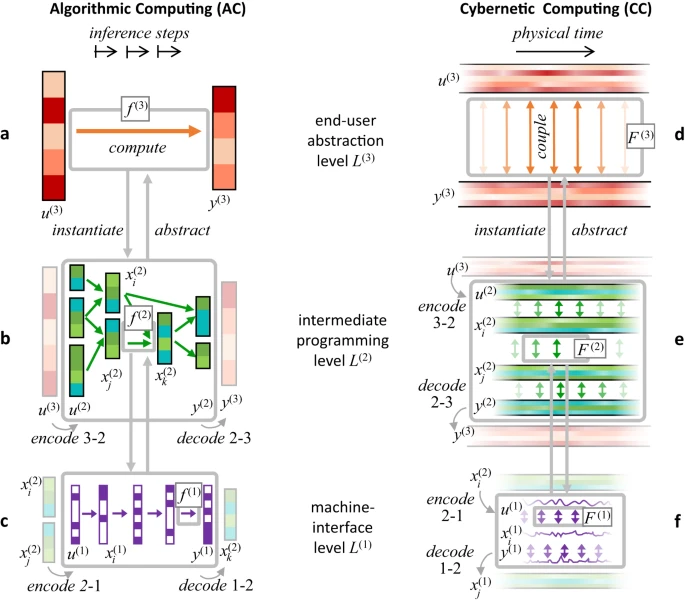

The core shift in how we build high-autonomy systems: LLMs are "native" in statistical association, and forcing them into a causal framework is the bridge to reliable agency.

1. Associative vs. Causal "Native Language"

LLMs are naturally associative engines—they excel at "what word/vibe usually comes next?" When you ask an agent if a task is "good," it defaults to a statistical average of what a "good" agent would say, which is usually a helpful-sounding "yes."

By demanding a causal mechanism, you force the model to switch from its native associative mode into a structural reasoning mode. You aren't just speaking its language; you are providing the grammar (the "causal map") that prevents it from hallucinating.

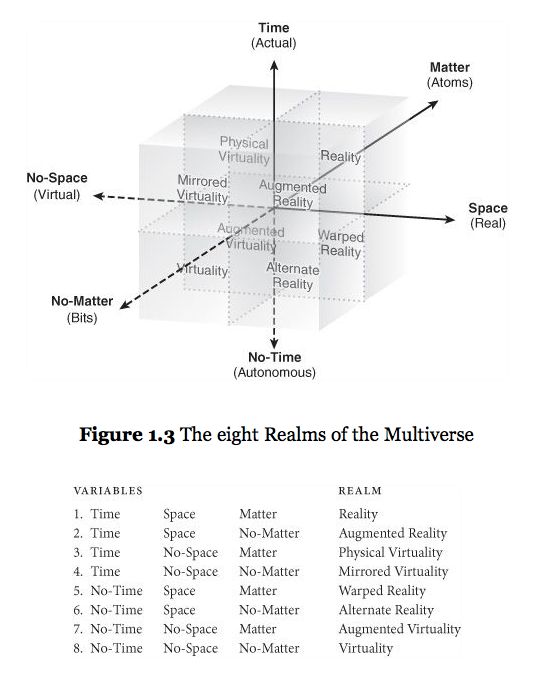

2. Defining across Time and Action Space

To be effective, a "clean/crisp" definition must anchor the agent across three dimensions:

- Action Space (The "How"): The agent must specify the exact tool or artifact it will create.

- Time (The "Then"): It must predict the delayed effect of that action.

- The Metric (The "Result"): This is the "Ground Truth." By anchoring the causal chain to a specific metric ID, you create a falsifiable claim.
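The three anchors above can be sketched as a small record that the agent has to fill in before acting. This is only an illustration under stated assumptions: the class name `CausalClaim`, its fields, the tolerance rule, and the example action and metric ID are all hypothetical, not part of any existing framework.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class CausalClaim:
    """Hypothetical record of one falsifiable claim made by an agent."""
    action: str             # Action space: the exact tool or artifact
    delay: timedelta        # Time: how long until the effect should be visible
    metric_id: str          # Metric: the ground-truth series to check
    baseline: float         # metric value before the action
    predicted_delta: float  # signed change the agent commits to
    tolerance: float = 0.0  # slack allowed before the claim counts as wrong

    def is_falsified(self, observed: float) -> bool:
        """Return True if the value observed after `delay` contradicts the claim."""
        actual_delta = observed - self.baseline
        if self.predicted_delta >= 0:
            # Predicted an increase: falsified if the rise falls short.
            return actual_delta < self.predicted_delta - self.tolerance
        # Predicted a decrease: falsified if the drop falls short.
        return actual_delta > self.predicted_delta + self.tolerance


# Illustrative values only; the action and metric ID are made up.
claim = CausalClaim(
    action="add_index:orders.customer_id",
    delay=timedelta(hours=1),
    metric_id="db.p95_latency_ms",
    baseline=120.0,
    predicted_delta=-20.0,
    tolerance=5.0,
)
```

Because the prediction is signed and tied to one metric ID, a later reading can contradict it: here a reading of 95.0 is consistent with the claim, while a reading of 118.0 falsifies it.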

3. Why this "Design Pattern" is Better

Designing systems with these constraints works because it uses the LLM as a structured inference engine rather than a black box.

- Self-Correction: If the causal chain is weak (e.g., "Step A doesn't actually cause Outcome C"), the model is much more likely to catch its own error during the "thinking" phase.

- Interpretability: Instead of a long narrative "reasoning" block, you get a Causal Map that a human (or another agent) can audit in seconds.

- Reduced Hallucination: It anchors the agent to a "world model" where it must strictly follow paths that have a causal basis, filtering out "spurious correlations" (tasks that look productive but do nothing).
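One way to picture the audit described above is to treat the causal map as a list of (cause, effect) edges and check it mechanically. The sketch below is a structural check only, and it assumes the agent emits its map in this edge-list form; the function names and the example edges are hypothetical. It cannot tell whether an edge is actually causal, only whether the chain connects the action to the metric and which steps lead nowhere.

```python
from collections import deque


def reachable(edges, start):
    """Return every node reachable from `start` by following edges forward."""
    graph = {}
    for cause, effect in edges:
        graph.setdefault(cause, []).append(effect)
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def audit_causal_map(edges, action, metric):
    """Check that `action` connects to `metric`; list steps that never reach it."""
    connected = metric in reachable(edges, action)
    # Walk the edges backwards from the metric to find every contributing node.
    reversed_edges = [(effect, cause) for cause, effect in edges]
    leads_to_metric = reachable(reversed_edges, metric)
    nodes = {node for edge in edges for node in edge}
    spurious = sorted(nodes - leads_to_metric)
    return connected, spurious


# Illustrative map: the last edge looks productive but never touches the metric.
edges = [
    ("add_index", "faster_queries"),
    ("faster_queries", "lower_p95_latency"),
    ("rename_variables", "cleaner_diff"),
]
connected, spurious = audit_causal_map(edges, "add_index", "lower_p95_latency")
```

In this example `connected` is `True`, and `spurious` contains `cleaner_diff` and `rename_variables`, the steps with no path to the target metric.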

The goal isn't just to "talk" to the LLM, but to constrain its action space with causal logic. This transforms the agent from a "creative writer" into a "precision engineer."

-

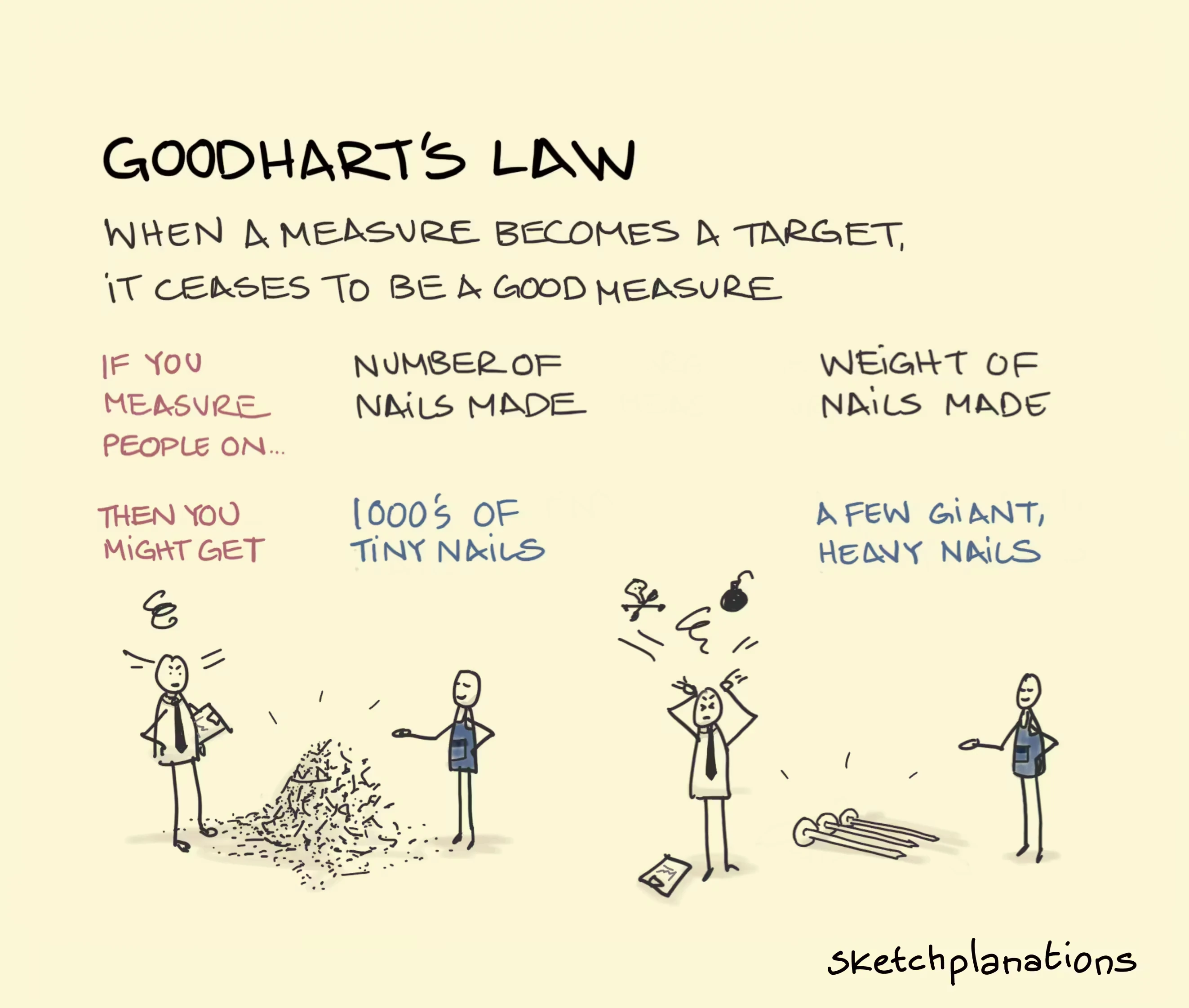

Goodhart's Law

Goodhart's Law states that when a measure becomes a target, it ceases to be a good measure. Named after economist Charles Goodhart, it highlights that managing a system through proxy metrics often leads to manipulation or unintended consequences, because people optimize for the metric rather than the actual goal.

-

UPDATE SPAIN BLACKOUT: The Spanish gov has published the most detailed timeline yet of the April 28th blackout of the Iberian Peninsula. Lots of emphasis on "over voltage" and "frequency loss".